Features & Architecture

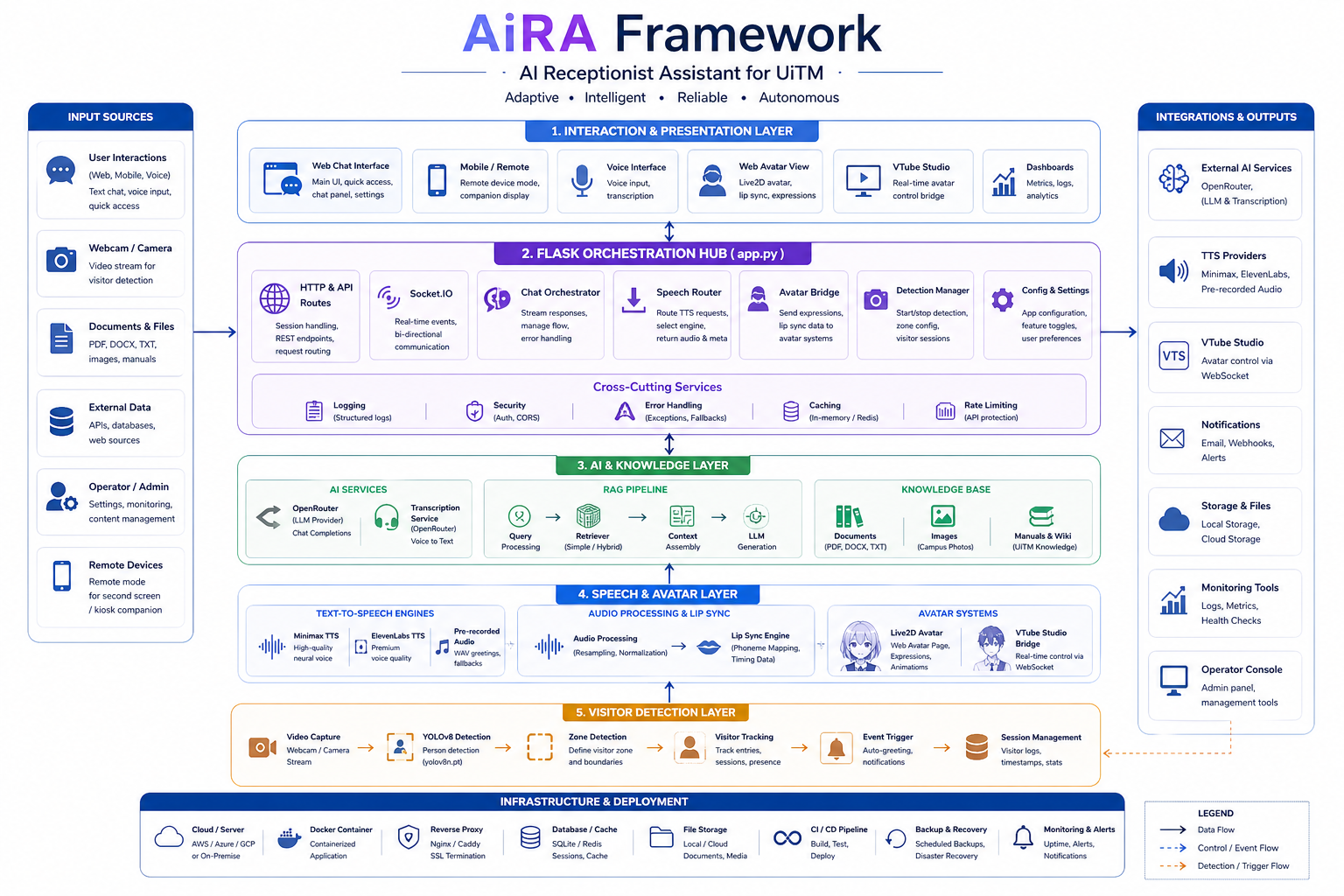

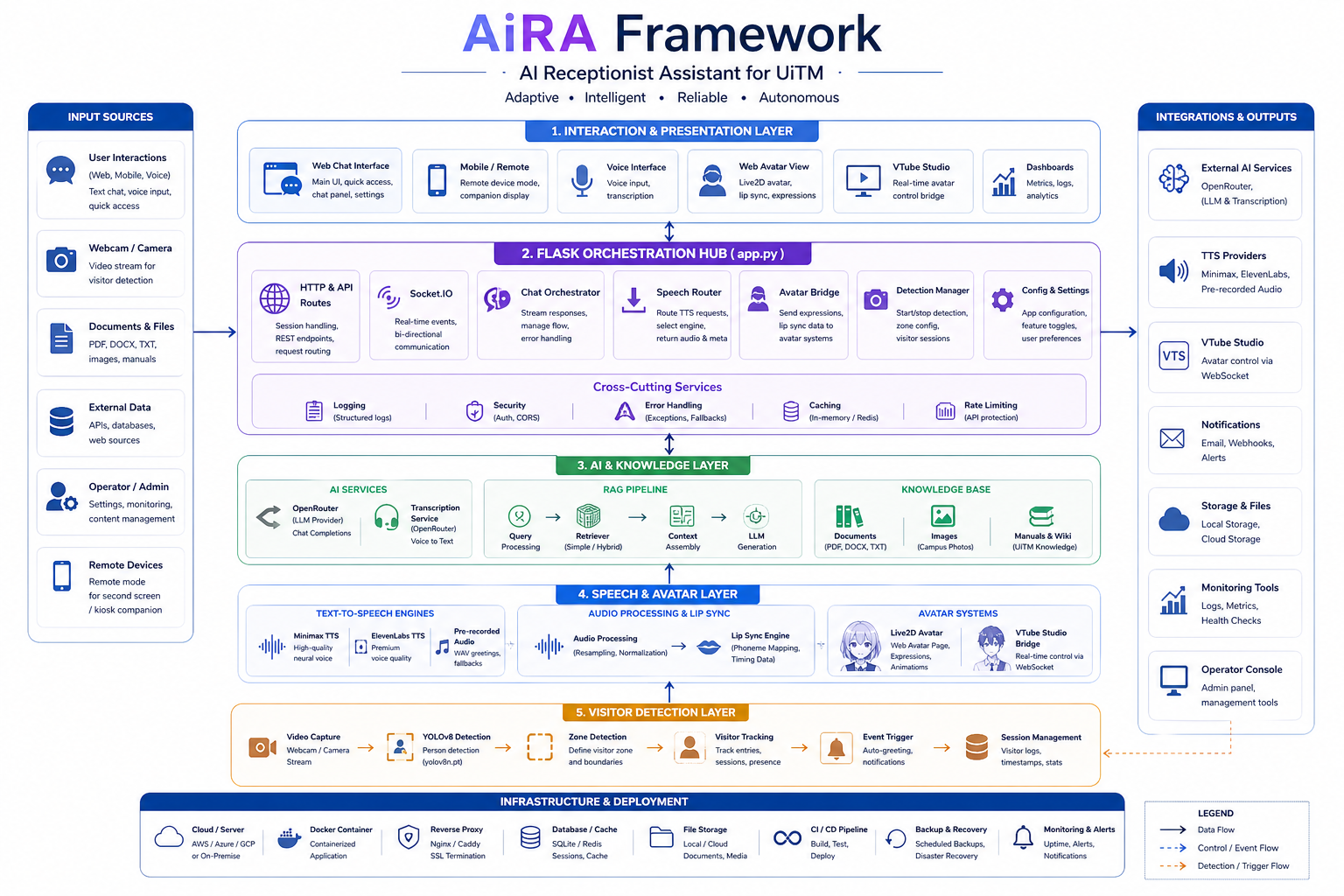

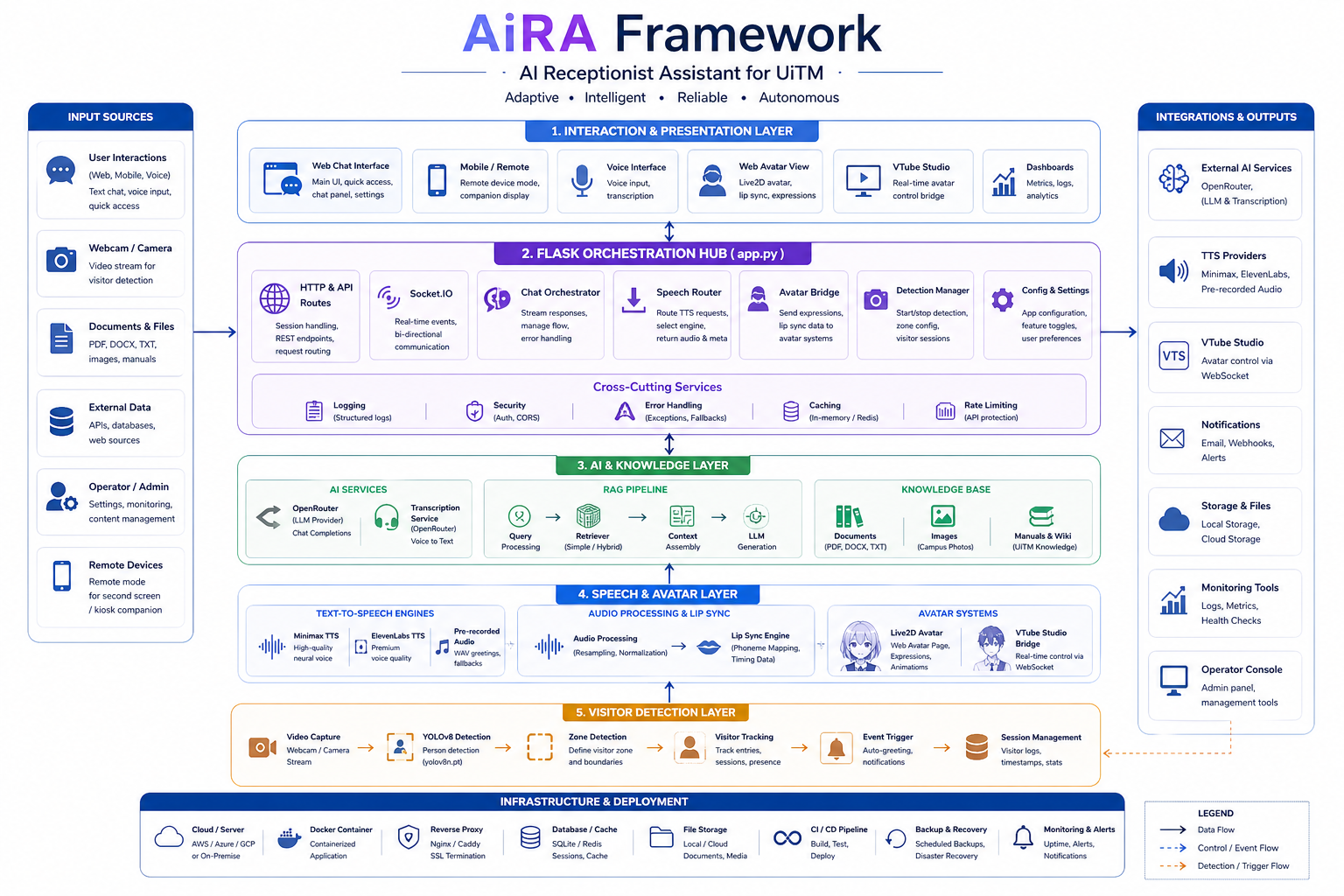

A modular, production-grade framework that orchestrates six integrated layers — from real-time voice and avatar interaction to AI-powered knowledge retrieval and computer vision detection.

Complete Architecture at a Glance

Every component, data flow, and integration point mapped across the full AiRA stack.

Figure 1 — AiRA Framework V3: Frontend Experience, Backend Hub, AI & Knowledge, Speech & Avatar, Visitor Detection, and Packaging & Deployment layers.

From Question to Answer in Three Steps

AiRA processes every interaction through a deterministic pipeline — multimodal input, RAG-powered reasoning, and multi-channel output — ensuring consistency, safety, and speed.

Input

The user engages through the kiosk touchscreen, web chat, mobile browser, voice command, or directly on the avatar display. The system automatically detects presence via YOLOv8, identifies the user's language preference, and classifies intent before routing to the appropriate handler.

Multi-ModalProcessing

The query enters the AI & Knowledge layer where the local RAG engine retrieves approved documents and images, ranks sources by relevance, applies configurable safety and content rules, and prepares a grounded, citation-backed answer. Service APIs and escalation workflows are invoked when needed.

RAG-PoweredOutput

The response is delivered through multiple channels simultaneously: clear text in the chat interface, natural spoken audio via Minimax or ElevenLabs TTS, animated lip-sync and gestures on the Live2D avatar, plus actionable elements like links, forms, tickets, queue directions, or staff escalation.

Multi-ChannelSix Integrated Layers

Each layer is independently testable and replaceable, yet tightly orchestrated through the Backend Hub for seamless real-time operation.

1. Frontend Experience

The public-facing interface: main conversational chat panel, quick-access command bar, browser-based voice input with microphone permissions, user settings panel, remote assistance mode, and visitor detection control dashboard. Built with vanilla HTML5, CSS3, and JavaScript on Socket.IO for responsive, real-time updates without page reloads.

HTML5 · CSS3 · JavaScript · Socket.IO2. Backend Hub

The Flask-powered central nervous system: HTTP route definitions, server-sent event streaming for real-time chat responses, Socket.IO namespace management for bidirectional communication, speech processing request routing, avatar animation bridge commands, and the complete visitor detection lifecycle from trigger to action.

Flask · Socket.IO · REST API3. AI & Knowledge Layer

The intelligence core: OpenRouter API integration for LLM text generation and speech transcription, a local Retrieval-Augmented Generation engine that indexes approved documents for fast semantic search, and support for both document text and image-based retrieval. All knowledge stays on-premise with no external data leakage.

OpenRouter · RAG · NLP4. Speech & Avatar Layer

The embodiment layer: dual text-to-speech engine support — UiTM Voice V1 via Minimax and Voice V2 via ElevenLabs for natural, expressive audio output. Pre-generated WAV greeting files for instant responses. Real-time lip-sync analysis maps phonemes to avatar mouth shapes. Live2D Cubism SDK drives the animated character, while VTube Studio integration enables professional-grade avatar control with gestures, expressions, and idle animations.

TTS · Live2D · VTube Studio5. Visitor Detection Layer

The environmental awareness layer: YOLOv8 real-time person detection running on local camera feeds. A configurable zone editor defines interaction boundaries within the camera view. Session tracking links each detected visitor to a conversation context. Auto-greeting events fire when a person enters the interaction zone, with an optional MJPEG stream for remote monitoring.

YOLOv8 · OpenCV · MJPEG6. Packaging & Deployment

The distribution layer: PyInstaller build specifications for creating standalone Windows executables, auto-generated API documentation from route decorators, step-by-step setup guides for fresh installations, and an installer/launcher configuration using NSSM for running AiRA as a resilient Windows service that auto-restarts on failure.

PyInstaller · NSSM · WindowsNatural Voice. Living Avatar. Real-Time Presence.

AiRA speaks with two distinct voice profiles — UiTM Voice V1 powered by Minimax for rapid deployment scenarios, and Voice V2 via ElevenLabs for studio-grade expressiveness with emotional tone control. Both engines stream audio in real time so the user never waits for the full response to finish generating.

The Live2D Cubism SDK drives a fully rigged 2D avatar character with dynamic bone physics, breathing idle cycles, and context-aware expressions. AiRA's lip-sync engine maps phoneme data to viseme mouth shapes frame-by-frame, creating the illusion of natural speech. For professional studio setups, the VTube Studio bridge forwards expression and motion commands so streamers and content creators can use their existing VTS character models as the AiRA avatar.

- Dual TTS engines — Minimax (V1) and ElevenLabs (V2)

- Real-time phoneme-to-viseme lip synchronization

- Live2D Cubism SDK with idle animations and expressions

- VTube Studio bridge for external avatar control

- Pre-generated WAV greetings for zero-latency welcome

- Gesture triggers mapped to conversation context

Know When Someone Arrives — Before They Speak.

AiRA's YOLOv8-based person detection runs continuously on a local camera feed, identifying when a visitor enters the kiosk area. The system uses a configurable interaction zone editor — define polygon regions within the camera's field of view where detection triggers engagement. False positives from background movement are filtered automatically.

Each detection event creates a visitor session with a unique identifier, timestamp, and duration tracking. When a person enters the interaction zone and lingers beyond the configurable threshold, an auto-greeting event fires: the avatar turns to face the visitor, plays a welcome message, and opens a conversation. An optional MJPEG stream provides a live view for remote administrators to monitor foot traffic and system health.

- YOLOv8 real-time person detection engine

- Configurable polygon interaction zones

- Visitor session tracking with dwell-time analysis

- Auto-greeting triggers on zone entry

- Optional MJPEG monitoring stream

- False-positive filtering for background motion

Configuration Groups & Variables

Each system layer exposes a dedicated configuration group. All values are hot-reloadable without restarting the server.

| Group | Key Variables | Description |

|---|---|---|

| OpenRouter | OPENROUTER_API_KEY, OPENROUTER_MODEL, OPENROUTER_MAX_TOKENS, OPENROUTER_TEMPERATURE |

API credentials, model selection (default: cognitivecomputations/dolphin3.0-r1-mistral-24b:free), token budget, and creativity control for LLM text generation. |

| Flask | FLASK_HOST, FLASK_PORT, FLASK_DEBUG, SECRET_KEY |

Server bind address, listening port, debug mode toggle, and session signing key for cookie security. |

| Minimax | MINIMAX_API_KEY, MINIMAX_GROUP_ID, MINIMAX_VOICE_ID, MINIMAX_SPEED, MINIMAX_VOLUME |

V1 voice engine credentials, group identifier, voice profile ID, speech rate multiplier, and output volume level. |

| ElevenLabs | ELEVENLABS_API_KEY, ELEVENLABS_VOICE_ID, ELEVENLABS_MODEL, ELEVENLABS_STABILITY, ELEVENLABS_SIMILARITY |

V2 voice engine credentials, voice profile selection, model variant (eleven_multilingual_v2), and voice consistency parameters. |

| RAG | RAG_INDEX_PATH, RAG_CHUNK_SIZE, RAG_TOP_K, RAG_SIMILARITY_THRESHOLD, RAG_MAX_SOURCES |

Local vector index storage path, document chunking granularity, number of retrieved passages, minimum relevance cutoff, and maximum cited sources per answer. |

| VTS | VTS_PORT, VTS_PLUGIN_NAME, VTS_PLUGIN_DEVELOPER, VTS_AUTH_TOKEN |

VTube Studio WebSocket port, registered plugin identity for authentication handshake, and session authorization token. |

| Detection | DETECTION_CAMERA_ID, DETECTION_CONFIDENCE, DETECTION_IOU_THRESHOLD, DETECTION_ZONE_POINTS, DETECTION_DWELL_SECONDS |

Camera device index, YOLOv8 confidence cutoff, intersection-over-union overlap threshold, polygon zone vertex coordinates, and minimum linger duration before auto-greeting fires. |